What Slay the Spire 2 Taught Me About Shipping Software

I died to the Act 3 boss while it had 1 HP left. I had the perfect deck, the perfect relic synergy, and a block engine that had carried me through the first two acts without breaking a sweat.

And then the Test Subject went intangible, because it does that every other turn, and my attacks couldn’t kill it. It survived with one hit point, just one, and it killed me on the next turn.

I was frustrated, obviously, but I queued up another run right after because that’s just what you do. You die and you go again.

Slay the Spire 2 is in Early Access right now, and I’ve been playing it way more than I should. But the thing that’s kept me thinking isn’t the gameplay. It’s how Mega Crit is building it in public, and their development process is something we can all learn from.

The Beta Branch Mentality

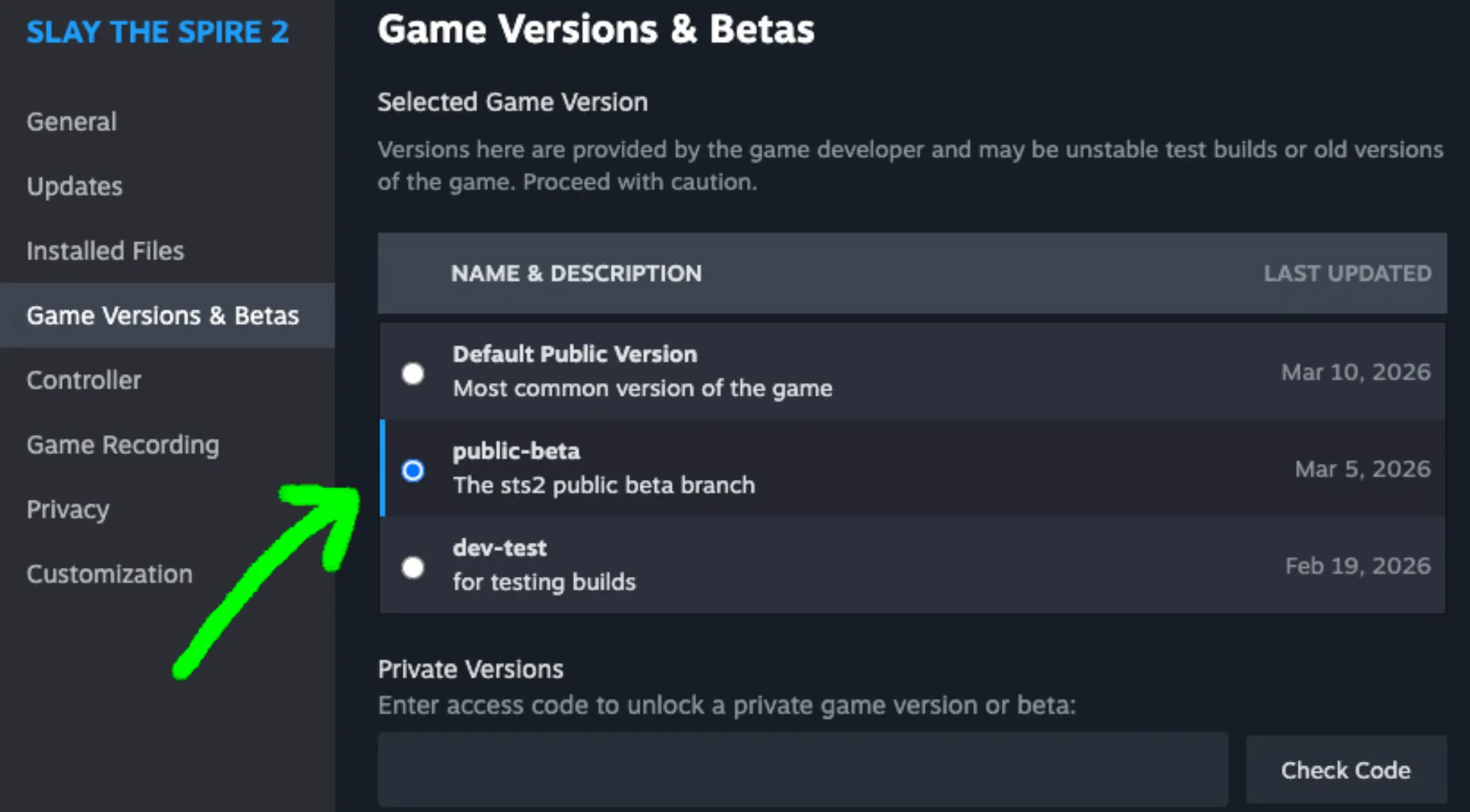

Mega Crit runs a public beta branch on Steam. If you opt in, you get patches days or sometimes weeks before they hit the main build. These patches are intentionally rough: cards get completely reworked, some get removed, relics get updated, entire mechanics show up. The changes are tested by potentially thousands of players, and then they might disappear in the very next beta update.

A lot of game studios don’t ship games this way. Honestly, most software engineering teams don’t either. But there’s something specific about how Mega Crit frames it. They’re not saying “here’s our finished thing, tell us if it’s broken.” They’re saying “here’s what we’re trying, here’s a changelog, try it out.”

This type of framing changes the whole dynamic because most software teams are terrified of showing unfinished work. It feels vulnerable, and there’s always a risk that people are going to judge that half-baked version and lose confidence in the team.

It helps that this is Early Access and that Mega Crit has a huge player base, since their first game was really successful. But what they figured out is that if you give people a safe, opt-in space to see the messy version, you get feedback you’d never get from a player survey. Players report how things feel, and they do it very loudly, especially if they feel strongly about the game.

Design Goals as a North Star

Early Access games get a flood of feedback. Every player has an opinion about balance, about which cards are too strong, which relics feel bad, which boss is unfair, and the temptation is to react to all of it, to chase the loudest voices on Reddit or Discord.

But honestly, not all opinions carry equal weight, and they shouldn’t be treated as if they do. Mega Crit doesn’t chase the loudest voices. They’ve talked openly about having pre-decided design goals for each character, each mechanic, each card archetype. So when feedback comes in, they measure it against those goals, not against popularity or upvotes.

This creates a clear hierarchy for how they tend to respond. There are three types of rework or adjustment:

- Small tweaks, which are number adjustments. A card costs 2 energy instead of 1, a relic triggers every 4 combats instead of 3 (although I would argue energy adjustments are bigger than just a small tweak). These happen constantly on the beta branch and they’re basically knob turns.

- Mid-level changes are structural but contained. Moving a card from uncommon to rare, changing when a mechanic unlocks, adjusting the cost curve of an entire archetype. These shift how the game feels without changing what it’s trying to be.

- A full-on rework. This happens when a mechanic just isn’t meeting its intended design goal, not because players don’t like it, but because it’s not what Mega Crit designed it to do. A card can be unpopular but still be working as intended. On the other hand, a card can be very popular but failing the archetype’s purpose.

The balance between standing your ground and listening to player feedback is a delicate one. I’ve seen software teams cave to the loudest feedback in the room and ruin a feature, or just expand the scope right before it was about to ship. I’ve also seen teams dig in deep on a bad idea because they confuse stubbornness with conviction. Mega Crit seems to navigate this by always going back to the original intent, their design principles. “What were we trying to achieve?” is a much better question than “what are people saying about our game?”

From Users to Collaborators

There was a patch a while back that changed some fan-favorite cards pretty significantly. Prepared was a great example. A zero-cost card that let you draw one and discard one became something completely different: it gave energy instead. The Steam reviews took a hit, players were upset, and there were constant YouTube videos: “Oh, Prepared is dead.” Everyone chimed in about what the devs should have done, could have done.

What Mega Crit does next is what really sticks with me. They don’t immediately roll back the changes, and they definitely don’t go silent. With each patch, they write detailed patch notes explaining why each change was made. For example, they might explain that a card was creating a pattern where players could skip an entire phase of the game, and that this could undermine the decision-making they think makes the deck-building part so interesting.

Then they roll back some of the changes because the community had a point on a few of them.

It’s really about turning your player base into collaborators. Explaining the logic behind a change turns an angry player into a partner. I’ve seen this at work. When we ship something that disrupts a workflow that people rely on, the reaction is always worse when we just say “we improved X” with no other follow-up. But when we explain the trade-off, when we say “we know this changes your flow, here’s what we were solving for, here’s what we were thinking,” people start poking at the solution with you instead of just being mad about it. They want to understand our thought processes.

The Vibe Metric

And here’s the thing Mega Crit gets that I think a lot of data-driven product teams miss: a card can be statistically balanced on paper but still feel really terrible to play. If the win rate is fine but nobody wants to pick the card, that’s a bug.

The analytics say it’s working, the players say it’s a chore, but the players are the ones who have to sit there and play it.
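Here’s a minimal sketch of that rule in Python, just to make it concrete. Everything in it is an assumption for illustration: the field names, the card names, and especially the thresholds. It is not how Mega Crit’s telemetry works, only one way a team might flag “balanced on paper, avoided in practice.”

```python
from dataclasses import dataclass

# Hypothetical thresholds, chosen only for illustration.
WIN_RATE_BAND = (0.45, 0.55)  # assumed range that counts as "statistically balanced"
MIN_PICK_RATE = 0.10          # assumed floor below which players are avoiding the card


@dataclass
class CardStats:
    name: str
    win_rate: float   # share of runs won with the card in the deck
    pick_rate: float  # share of offers where the card was actually taken


def feels_bad(card: CardStats) -> bool:
    """Flag cards that look balanced by win rate but that players rarely pick."""
    low, high = WIN_RATE_BAND
    balanced = low <= card.win_rate <= high
    return balanced and card.pick_rate < MIN_PICK_RATE


# Made-up sample data, not real game statistics.
cards = [
    CardStats("Card A", win_rate=0.51, pick_rate=0.04),  # balanced, but avoided
    CardStats("Card B", win_rate=0.50, pick_rate=0.35),  # balanced and picked
    CardStats("Card C", win_rate=0.70, pick_rate=0.02),  # not balanced: a different problem
]

flagged = [c.name for c in cards if feels_bad(c)]
print(flagged)  # ['Card A']
```

The point of the sketch is that the flag only fires when the dashboard metric looks healthy. A check like this doesn’t replace talking to players; it just tells you where to go ask how something feels.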

I think about this when I look at product metrics. Usage numbers can tell me a feature is “working” while completely hiding that people only use it because they have to, or that they hate using it. Completion rate on a form can look great while every user is silently frustrated by the information architecture. Data tells me what’s happening, but vibe tells me how it feels. Sometimes that qualitative feedback is the thing that determines whether someone comes back or refers your product to someone else.

Mega Crit seems to weigh qualitative feedback just as heavily as their telemetry data. They watch streamers play. They read the long-form posts on Reddit. They understand that the feedback people are giving is messy, but once they normalize it and quantify it, they end up catching a lot of things that no dashboard ever will.

The thing I’m taking back to my own work is pretty simple: the best feedback loops are built on trust. Trust that your users can handle the messy version. Trust that explaining your reasoning makes people want to help instead of just complain. And finally, trust that “it doesn’t feel right,” that vibe, that something’s off, is also real data, even when the numbers say otherwise.

Now if you’ll excuse me, I gotta get to Ascension 10 with at least one character.